My role

Lead UX Designer

Company

Amazon

Scope

AI-assisted product discovery and recommendation systems

Impact

$20M in reduced costs

Products such as mattresses, large appliances, and furniture involve extended research cycles. Customers compare materials, read reviews, watch videos, and often discuss options with a partner before purchasing.

Returns in these categories carry significant operational cost. Once used, many of these products cannot be resold. Reverse logistics, disposal rules, and refurbishment add further expense. Service misunderstandings can also create operational friction. Customers sometimes cancel delivery or setup appointments when expectations around installation, haul-away, or access requirements are unclear.

While working in this category, I observed that many customer questions were not simple product discovery questions. Shoppers were trying to navigate trade-offs across product attributes, personal preferences, and operational constraints.

At the same time, early AI shopping experiences revealed another pattern. Many customers treated conversational AI like a search engine, submitting very short queries rather than exploring their full set of constraints. This limited the system’s ability to guide complex decisions.

These purchases are high-stakes. Customers balance comfort, cost, compatibility, and delivery logistics while trying to avoid an expensive mistake. For the business, improving these decisions can influence return rates, service cancellations, and long-term trust in AI-assisted shopping.

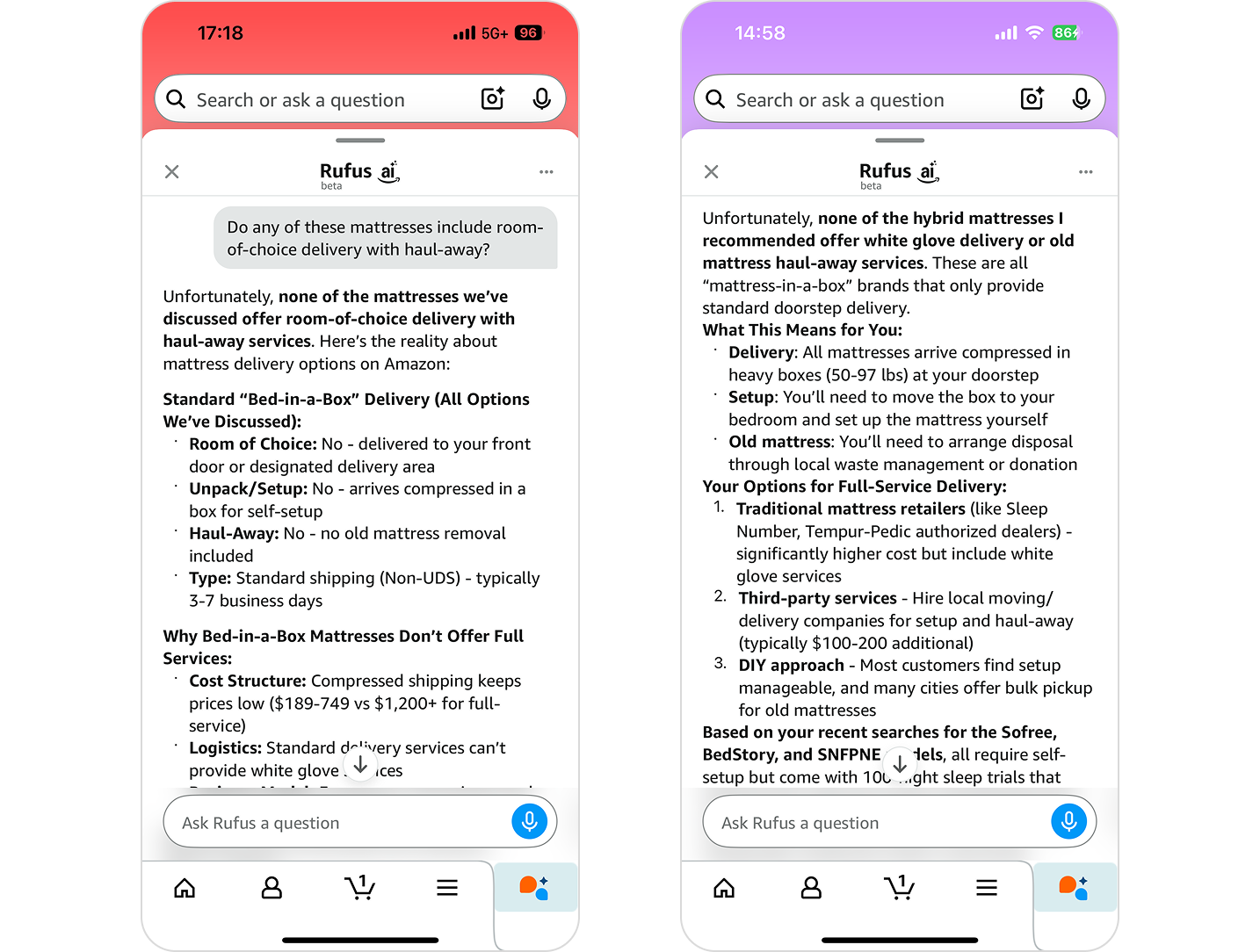

During early testing of the existing customer experience (CX), I asked the system a simple question:

Do these mattresses include room-of-choice delivery, in-home setup, or haul-away service?

The language model responded confidently that Amazon does not offer these services and suggested looking elsewhere.

The response sounded plausible but was incorrect. In production systems, errors like this are not trivial. They create real business risk: they misrepresent service availability, undermine partner visibility, erode customer trust, and can expose the platform to compliance or contractual risk.

The model relied on general retail patterns from training data rather than evaluating structured service metadata tied to the product and the customer’s location.

Without grounding in structured data, the system could produce fluent answers that were not aligned with actual product or service availability.
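To illustrate what grounding means here, the sketch below answers the delivery-service question from a structured record keyed by product and postal code instead of from the model's general knowledge. It is a minimal sketch: the `SERVICE_CATALOG` table, the `ServiceOffer` shape, the service names, product IDs, and postal codes are all hypothetical placeholders, not Amazon's actual service schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ServiceOffer:
    """Hypothetical record: one service a product supports, and where."""
    service: str                  # e.g. "room_of_choice", "in_home_setup", "haul_away"
    postal_codes: frozenset[str]  # postal codes where the service is available


# Hypothetical structured metadata: product ID -> service offers.
SERVICE_CATALOG: dict[str, list[ServiceOffer]] = {
    "MATTRESS-A": [
        ServiceOffer("room_of_choice", frozenset({"98101", "98109"})),
        ServiceOffer("in_home_setup", frozenset({"98101"})),
        ServiceOffer("haul_away", frozenset({"98101", "98109"})),
    ],
    "MATTRESS-B": [
        ServiceOffer("room_of_choice", frozenset({"10001"})),
    ],
}


def service_availability(product_id: str, postal_code: str,
                         requested: list[str]) -> dict[str, Optional[bool]]:
    """For each requested service, return True/False from the catalog,
    or None when there is no record for the product at all."""
    offers = SERVICE_CATALOG.get(product_id)
    if offers is None:
        # No structured data: report "unknown" instead of guessing "not offered".
        return {svc: None for svc in requested}
    available = {o.service for o in offers if postal_code in o.postal_codes}
    return {svc: svc in available for svc in requested}


requested = ["room_of_choice", "in_home_setup", "haul_away"]
print(service_availability("MATTRESS-A", "98109", requested))
# {'room_of_choice': True, 'in_home_setup': False, 'haul_away': True}
print(service_availability("MATTRESS-Z", "98109", requested))
# {'room_of_choice': None, 'in_home_setup': None, 'haul_away': None}
```

One design choice worth noting: a product with no service record returns `None` (unknown) rather than `False`. Collapsing "no data" into "not offered" is the same failure seen in testing, so in this sketch the explanation layer would say it cannot confirm the service instead of denying it.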

As I worked on the problem, I noticed that many teams approached generative AI using the same methods used for traditional interface design.

Design workflows were built around deterministic systems. User flows defined the path through an interface, states were predictable, and edge cases could be enumerated.

When a user interacts with a traditional UI component, the system produces a defined result.

Generative models behave differently. Responses are generated dynamically, and the same input can produce slightly different outputs. Trade-offs often occur inside the model rather than through explicit logic.

Early design artifacts reflected these traditional assumptions. Static mockups described conversational layouts and tone guidelines, but they did not define how the system should evaluate competing constraints or determine which outcome should be recommended.

The interface was defined. The reasoning process was not.

This gap became clear during early testing when the system generated plausible but incorrect answers about delivery services.

To address this, I shifted the design focus away from interface states and toward the structure of the decision process itself.

Customer inputs such as comfort, cost, and compatibility frequently conflict. A recommendation engine must reconcile these variables while also accounting for operational constraints such as service eligibility and geographic coverage.

Language models can summarize these factors, but they do not consistently enforce them when generating answers.

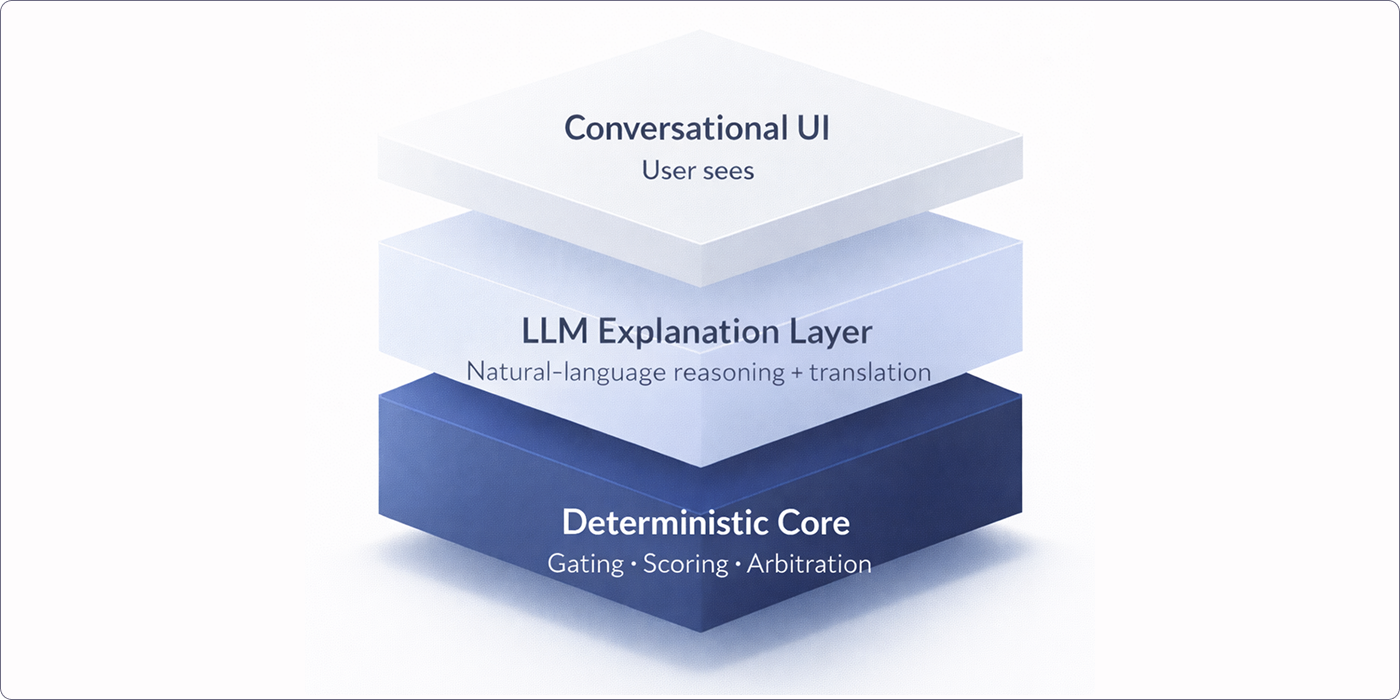

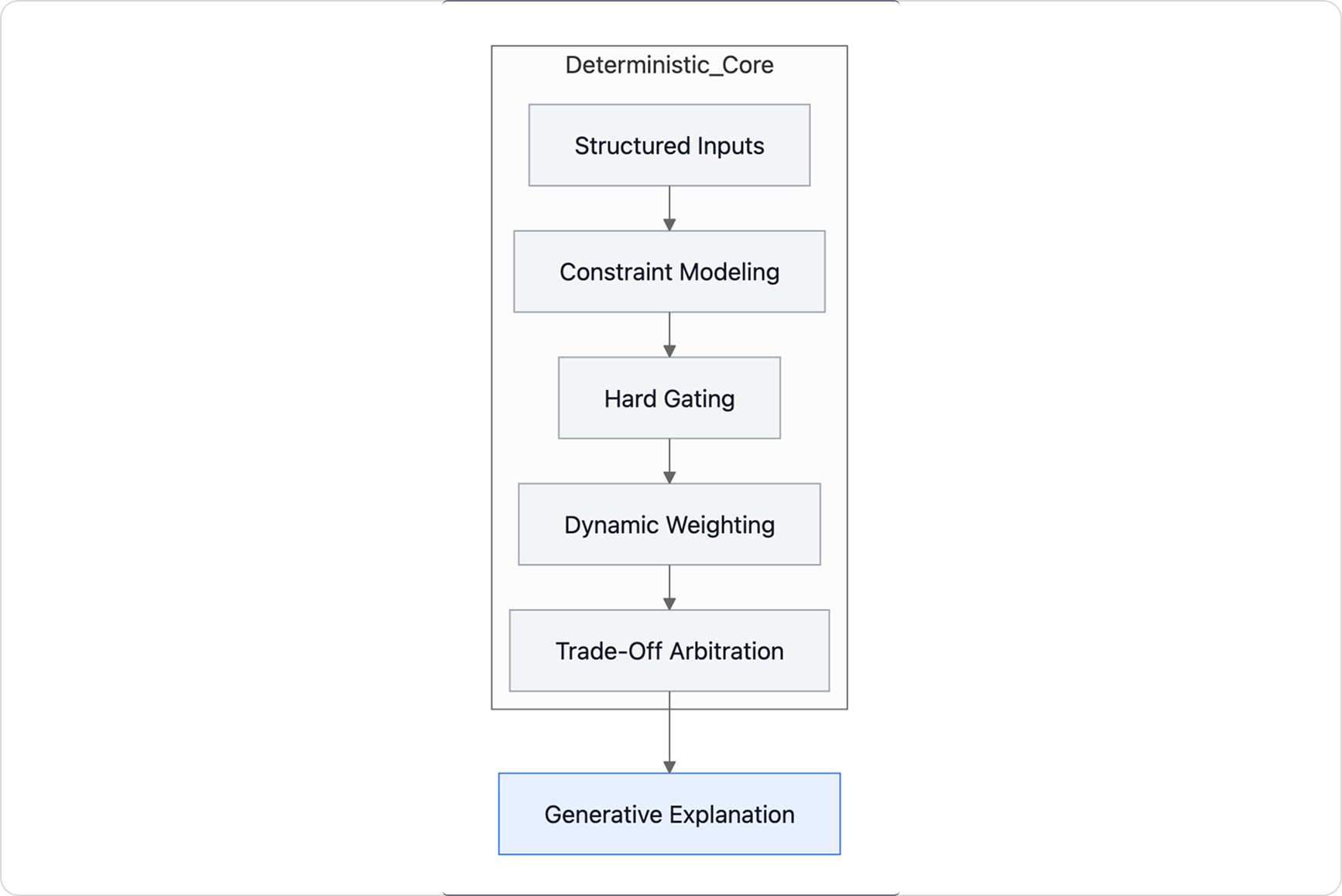

To address these issues, I designed an architecture that introduced a structured decision layer before language generation.

The decision layer evaluates constraints using product metadata, customer preferences, and operational rules. It filters invalid options, checks geographic service eligibility, and ranks candidates according to user preferences.

In this architecture, language generation communicates the result of the evaluation rather than determining it.
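One way to keep language generation in a communicating role is to hand the model the finished decision as fixed facts and then run a cheap deterministic check on what it writes. The sketch below only builds the prompt and checks the output; it makes no model call, and the prompt wording, the `decision` dictionary shape, and the substring-based check are hypothetical illustrations.

```python
import json


def build_explanation_prompt(question: str, decision: dict) -> str:
    """Wrap an already-computed decision in instructions that limit the
    model to explaining it. The wording is a hypothetical example."""
    facts = json.dumps(decision, indent=2, sort_keys=True)
    return (
        "Explain the recommendation below. It has already been decided.\n"
        "Use only the facts in the JSON. Do not add, drop, or re-rank products.\n"
        "If a needed fact is null or missing, say you cannot confirm it.\n\n"
        f"Customer question: {question}\n\n"
        f"Decision facts:\n{facts}\n"
    )


def explanation_is_consistent(text: str, decision: dict) -> bool:
    """Crude post-generation check: the text must name the top pick and
    must not name any product the decision layer excluded."""
    if decision["top_pick"] not in text:
        return False
    return not any(pid in text for pid in decision["excluded"])


decision = {
    "top_pick": "MATTRESS-A",
    "excluded": ["MATTRESS-C", "MATTRESS-D"],
    "services_at_location": {
        "room_of_choice": True,
        "in_home_setup": False,
        "haul_away": None,  # unknown: no structured record
    },
}
print(build_explanation_prompt("Which mattress should I buy?", decision))
print(explanation_is_consistent("MATTRESS-A fits your budget and firmness range.", decision))  # True
print(explanation_is_consistent("MATTRESS-C is the softest option.", decision))  # False
```

A substring check is deliberately crude (an ID like `MATTRESS-A` would also match inside `MATTRESS-AB`); it is shown only to make the division of labor concrete: the decision layer owns the outcome, and the model's text is validated against it.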

Traditional UX artifacts represent fixed interface states. In this project, system behavior depended on combinations of user inputs and operational constraints.

Recommendation outcomes changed depending on combinations of:

Representing these variations through static screens would have required many permutations without showing how the system actually behaved.

Instead of producing a large set of interface mocks, I built a working prototype.

This allowed me to test how the decision layer behaved under real inputs, observe how constraints filtered candidate products, and evaluate how different preference weightings changed the outcome.

The prototype made the decision logic visible and testable, which helped engineering and ML partners understand how the system should operate.

The prototype combined structured product attributes, deterministic scoring logic, and live language model calls that generated explanations.

This allowed real user queries to pass through the system while exposing the underlying decision logic.

During testing, participants could interact with the system in a way that closely resembled a real AI-assisted shopping experience. Instead of reacting to static mockups, users were able to ask questions, adjust constraints, and see how recommendations changed in response.

This approach made it possible to observe:

Testing the live prototype produced much richer feedback than a traditional mock-based usability session. Participants were responding to the system’s behavior rather than imagining how it might work.

The prototype also became a shared artifact across design, engineering, and ML teams, helping everyone understand how the decision layer should behave under real inputs.

During validation sessions, I observed a consistent pattern.

Participants expressed greater confidence when recommendations consistently referenced specific product attributes and constraints. They also asked fewer follow-up questions when the system described the trade-offs involved in the recommendation.
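Describing trade-offs can also be computed rather than left to the model. The sketch below compares the recommended product with the runner-up attribute by attribute and separates what the customer gains from what they give up; the attribute names, the `higher_is_better` map, and the values are hypothetical.

```python
def describe_tradeoffs(top: dict, runner_up: dict,
                       higher_is_better: dict[str, bool]) -> dict[str, list[str]]:
    """Compare the recommended product with the runner-up, attribute by
    attribute, and split differences into gains and things given up."""
    gains: list[str] = []
    gives_up: list[str] = []
    for attr, higher_better in higher_is_better.items():
        t, r = top[attr], runner_up[attr]
        if t == r:
            continue  # no trade-off on this attribute
        top_wins = (t > r) if higher_better else (t < r)
        line = f"{attr}: {top['name']} {t} vs {runner_up['name']} {r}"
        if top_wins:
            gains.append(line)
        else:
            gives_up.append(line)
    return {"gains": gains, "gives_up": gives_up}


top = {"name": "MATTRESS-A", "price": 899, "comfort_score": 4.6, "delivery_days": 5}
runner_up = {"name": "MATTRESS-B", "price": 649, "comfort_score": 4.1, "delivery_days": 3}
higher_is_better = {"price": False, "comfort_score": True, "delivery_days": False}

print(describe_tradeoffs(top, runner_up, higher_is_better))
```

The output gives the explanation layer concrete, attribute-level statements to phrase, which lines up with the pattern described above: recommendations that cite specific attributes and name the trade-off.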

Users wanted visibility into why a product was selected and how competing preferences were balanced. They also wanted recommendations that were consistent and personalized to their needs.

Through prototype testing and early deployment work, I observed improvements across AI system design, customer behavior, and operational metrics. Exact figures cannot be shared due to internal reporting constraints.

Organizational Impact

Product Impact

User Experience Impact

Although I developed this approach while working on mattress shopping, the same decision layer pattern appears in many high-stakes decision environments.

Examples include:

This project began as an effort to design a more capable conversational shopping assistant. As I explored the problem, the work shifted toward defining how the system computes decisions before presenting them conversationally.

As AI systems move closer to consequential decisions, product design increasingly involves shaping how outcomes are computed, not just how they are presented.

A longer write-up of this project is available on Substack:

Designing AI That Reduces Regret in High-Stakes Commerce